Chinese law enforcement linked to largest covert influence operation ever discovered

Meta announced on Tuesday the removal of thousands of fake accounts from Facebook that were operated as part of “the largest known cross-platform covert influence operation in the world,” and which researchers believe is linked to individuals associated with Chinese law enforcement.

Despite the campaign’s unprecedented size and spread — detailed in the company’s latest adversarial threat report — its spammy attempts to shape public opinion were generally of very low quality, and often failed to reach their targeted audiences in Taiwan, the United States, Australia, the United Kingdom and Japan.

The network was uncovered by linking separate clusters of fake posts and propaganda that had been tracked since 2019, including an operation named Spamouflage by researchers at the social media analytics business Graphika. Experts at Graphika — including Ben Nimmo, the company’s director of investigations who has since become Meta’s global threat intelligence lead — first exposed Spamouflage in 2019.

While this reporting helped push the network’s activities off the major platforms, Meta has since tied together several campaigns occurring across a wider range of smaller forums that it says were actually part of a single cross-platform covert influence operation, which it has also called Spamouflage.

“Although the people behind this activity tried to conceal their identities and coordination, our investigation found links to individuals associated with Chinese law enforcement,” said Meta.

The threat report follows an announcement by the U.S. Department of Justice in April of charges against 34 officers in China’s national police for creating fake online personas to harass U.S.-based critics of the Chinese Communist Party and to spread Beijing’s propaganda and narratives overseas.

According to the DOJ, the officers working with the Ministry of Public Security “were assigned to an elite task force called the ‘912 Special Project Working Group’ … a troll farm that attacks persons in our country for exercising free speech in a manner that the PRC government finds disagreeable, and also spreads propaganda whose sole purpose is to sow divisions within the United States.”

Jack Stubbs, Graphika’s vice president of intelligence, told Recorded Future News that the business saw “limited open source connections between Spamouflage and the activity described in the DOJ indictment. Those connections are currently low confidence and our investigation is ongoing.”

Responding to a question from Recorded Future News during a call with journalists, Nimmo confirmed that the social media aspects of the DOJ’s indictment were part of the Spamouflage campaign. However, he said Meta could not be too specific about the technical indicators it used to link the activity to Chinese law enforcement.

High-volume, low-quality, low-reach

According to Meta, the operators behind the campaign worked in geographically dispersed locations across China while being centrally provisioned with internet access and content directions — including sharing internet proxy infrastructure, despite the operators of these fake accounts being based hundreds of miles apart.

This activity echoes that alleged in the DOJ indictment, which notes how multiple accounts operated by the 912 Group on Facebook used the same device and IP address (resolving to an internet service provider based in Texas) to defame a prominent expatriate critic of the Chinese Communist Party.

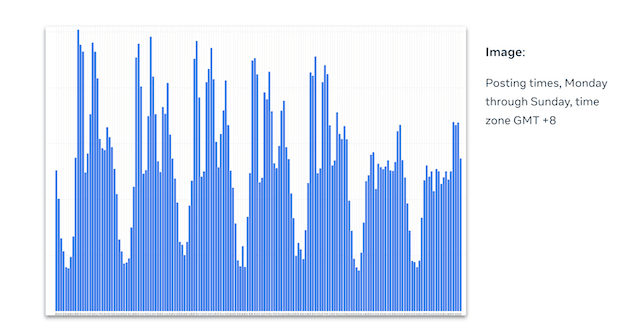

Meta said the behavior of these clusters indicated they were operated by groups potentially sharing an office space, noting that they worked “to a clear shift pattern, with bursts of activity in the mid-morning and early afternoon, Beijing time, with breaks for lunch and supper, and then a final burst of activity in the evening.”

The daily posting pattern for the Spamouflage operation. Image: Meta


Despite its size and the resources behind it, the influence operation does not appear to have been particularly effective — although the DOJ noted multiple distressing attempts to harass the family members of Chinese nationals who criticized Beijing from abroad.

One story the operation repeatedly shared, including on a niche and largely inactive subforum for Pakistani government employees, was a poorly written English-language post headlined “Queen Elizabeth II Dead or Related to New Prime Minister Truss?”, which suggested that the now-former prime minister may have “displeased the Queen and hastened her death.”

Search results for that same headline show the content being cross-posted to Flickr, Blogspot, TikTok, Instagram and Medium, although none of these occurrences appear to have received significant engagement.

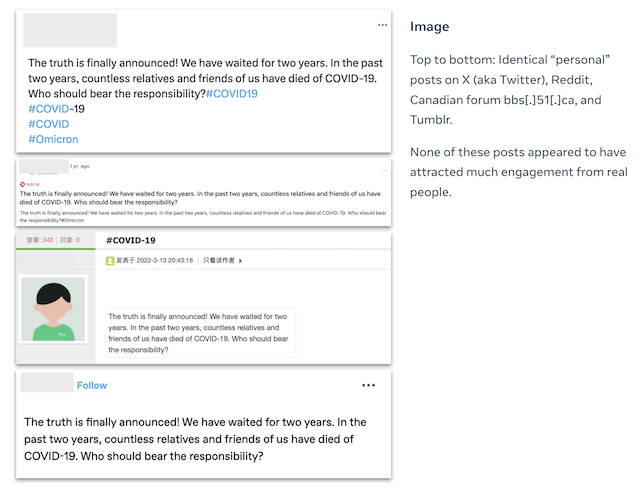

Meta noted the network’s tactic of “spraying” the same article across different platforms made it more resilient against takedowns, but suggested this benefit could have been accidental for the operators who “may simply have been trying to achieve a production quota for their campaign.”

The repeated use of “highly distinctive headlines makes [the operation] particularly vulnerable to cross-platform, open-source investigation,” Meta noted. It cited the headline above, alongside others containing typos and linguistic errors such as “Rummors and truth of COVID-19” and “Guo Wengui's Lies, Little Ant's Drugs,” as examples of content that was easy to track.

Examples of reused content in the Spamouflage campaign. Image: Meta

In total, Meta removed 7,704 Facebook accounts, 954 Pages, 15 Groups and 15 Instagram accounts that formed part of what was described as a “prolific” operation spanning more than 50 other platforms and forums.

In another indication that the operators may have been working to inefficient quotas, Meta noted that the network’s Facebook Pages, with around 560,000 followers, “were likely acquired from spam operators with built-in inauthentic followers primarily from Vietnam, Bangladesh and Brazil,” meaning the pages “that mainly posted in Chinese and English were almost exclusively followed by accounts from countries outside of their target regions.”

Alexander Martin is the UK Editor for Recorded Future News. He was previously a technology reporter for Sky News and a fellow at the European Cyber Conflict Research Initiative, now Virtual Routes. He can be reached securely using Signal on: AlexanderMartin.79