'Designed for peacetime, not war:' How Ukraine is forcing companies to rethink content moderation

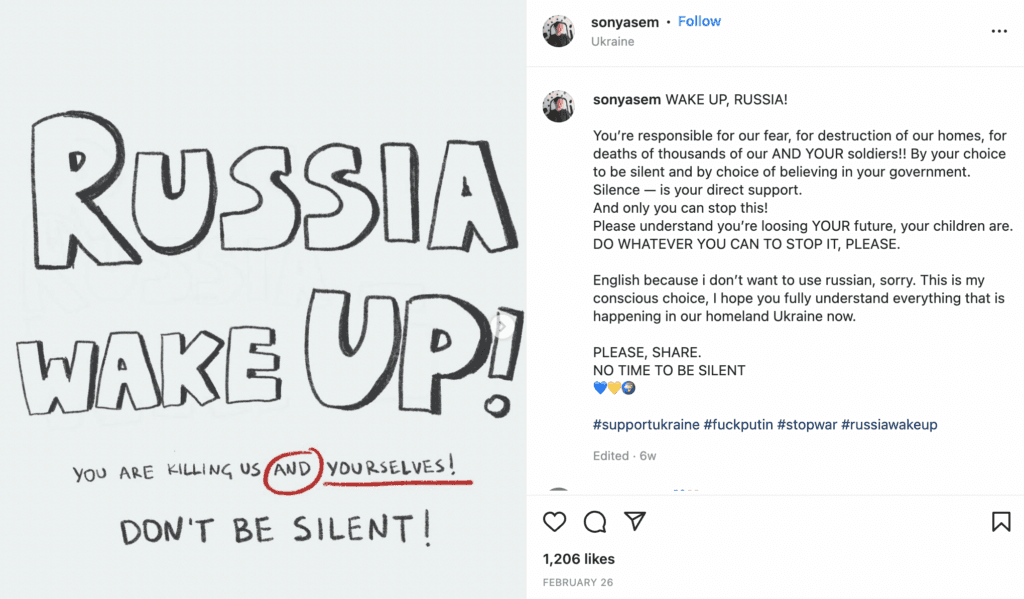

Before Russia invaded Ukraine, artist Sonya Sem used her Instagram to post images of abstract paintings, photos of her dog, and selfies about self-love and creativity. Then everything changed in February, when Russia dropped bombs on her native Kyiv.

Since the start of the war, Instagram has become one of the main platforms for Sonya and 17.3 million other Ukrainian users to tell the world about their pain and fears. Sonya also used it to raise money for the Ukrainian army — she sold her art and Ukraine-themed merchandise.

Like many Ukrainians writing online about Russia’s atrocities, Sonya is aware of Instagram’s content moderation policy that restricts access to “sensitive” content — photos that “could potentially be upsetting to some people,” as Instagram put it in an announcement last year.

Ukrainians raised concerns about Instagram and Facebook content controls even before Russia’s invasion, but they became more insistent when the photos of dead bodies found in Bucha flooded the internet.

Bucha, where Sonya spent most of her childhood, came into the spotlight when Russia withdrew its troops from the region, leaving the bodies of more than 320 civilians lying in the streets. Many world leaders, including President Joe Biden, called Russia's atrocities in Bucha a war crime.

The Kremlin said that photos of dead civilians and mass graves were "staged" by Ukrainian authorities after Russian troops left the town on March 31. Satellite images published by The New York Times show that some bodies had been lying there for nearly two weeks before Russian troops left the town.

“It’s hard to believe our eyes. We see this reality through our Instagram feed,” Sonya wrote on April 3. But every time she posted a photo showing the massacre in Bucha, Instagram censored it and labeled the picture as “sensitive content.”

“We are all trapped in this box of pain together,” Sonya wrote, referring to her art showing two Instagram stories with censored pictures. After it went viral, Sonya decided to sell her art as an NFT — for $262 each — donating the money to the Ukrainian military, she said.

Russia’s war in Ukraine has raised new questions about the responsibility of social media and its power to shape the narrative of the conflict. “As a society, we still struggle with what are appropriate images for people to see to understand the extent of the war,” Katie Harbath, CEO of Anchor Change and the former public policy director at Facebook, told The Record.

On one side of this conflict is Meta — Facebook and Instagram’s parent company — a global giant with an established set of rules and policies requiring its content moderators to stick to them regardless of personal feelings.

But on the other side, there are Ukrainians who mostly see what’s happening in the besieged cities through the lens of social media. For Russians, in turn, whose government called Meta “an extremist” organization, social media is one of the few places to learn what their troops are up to in Ukraine.

Should Meta continue to hide the disturbing pictures behind “the box of pain?” The Ukrainian government strongly disagrees.

“Meta’s policy was designed for peacetime, not war,” said Anton Melnyk, an expert on innovation and startups at the Ukrainian Ministry of Digital Transformation. “War changes people: when their homes are destroyed and loved ones are killed, they don't think properly about how to communicate online.”

“If Meta uses obsolete rules, it can bar all Ukrainians from speaking up about the war on its platforms,” Melnyk told The Record.

Changing rules, internal confusion

At the beginning, Meta’s response to the war in Ukraine received positive feedback from local experts and Ukraine’s President Volodymyr Zelensky.

War is not only a military opposition on UA land. It is also a fierce battle in the informational space. I want to thank @Meta and other platforms that have an active position that help and stand side by side with the Ukrainians.

— Володимир Зеленський (@ZelenskyyUa) March 13, 2022

“Meta has proved to be not only a business-oriented company but also a socially responsible one,” said Solovey Igor, head of the Ukrainian Center for Strategic Communications and Information Security. “It took the right side from day one, promptly taking down fakes spread by Russia,” he told The Record.

Internally, however, Meta’s lack of consistency in what kinds of posts were allowed about the war in Ukraine caused confusion among its content moderators, The New York Times reported on March 31.

Meta has made more than half a dozen content policy revisions since Feb. 24, according to the report, under pressure from both Russia and Ukraine.

Since the first day of the war, the Ukrainian Ministry of Digital Transformation (MinDigital) has been sending emails to Meta, according to Melnyk, asking it to block Kremlin propaganda on Facebook and Instagram and to allow Ukrainians to express their hatred over the war with Russia more openly.

In a temporary change to its hate speech policy, Meta allowed users in Eastern European countries to call for violence against Russian soldiers and wish death to Russia’s President Vladimir Putin or Belarusian President Alexander Lukashenko, Reuters reported on March 10, citing internal emails.

Later, on March 14, Reuters reported that Meta had prohibited calls for the death of a head of state.

A Ukrainian government official — who works closely with Meta representatives in Ukraine and the US and asked to remain anonymous — told The Record that Meta changed the policy after allegations of human rights violations from the United Nations.

“We try to think through all the consequences, and we keep our guidance under constant review because the context is always evolving,” Nick Clegg, the president for global affairs at Meta, wrote in an internal memo obtained by Bloomberg.

Moderating war content is difficult no matter who is releasing the information — the government, the media or a tech platform, according to Harbath. “It would be good for all tech companies to do a bit more planning for situations like these — such as how you can keep content moderators up to speed on rapidly changing policies,” she added.

One of the reasons for Meta’s chaotic policy is the lack of “systematic communication” between the company and the Ukrainian government, said Dmytro Zolotukhin, former deputy minister of Ukraine’s information policy.

“This lack of communication is preferable to Meta because it allows the company to avoid responsibility for the inaction,” Zolotukhin told The Record.

People in charge

To moderate war-related content in Ukraine, Meta established “a special operations center” staffed by the company’s experts. They are “working around the clock to monitor and respond to this rapidly evolving conflict in real-time,” said Magdalena Szulc, Meta’s communications manager in the European region.

In total, Facebook has more than 40,000 people working in its safety and security department, of whom about 15,000 are content reviewers. In Europe, Meta’s employees work from Warsaw, Riga, Dublin and Barcelona. The company doesn’t have an office in Ukraine and, “contrary to some myths,” it doesn’t have moderators working in Moscow and never had an office there, Szulc told The Record.

Meta’s chief executive Mark Zuckerberg and chief operating officer Sheryl Sandberg have been directly involved in the company’s response to the war in Ukraine, according to The New York Times. Publicly, however, the company’s policy in Ukraine and Russia was the responsibility of Nick Clegg.

When the Russian government designated Meta as an extremist organization earlier in March, Clegg wrote on Twitter that Meta has no quarrel with the Russian people and will not tolerate any kind of discrimination, harassment, or violence toward Russians on its platform. He hasn’t tweeted about the war in Ukraine since then.

Although Meta doesn’t have an office in Ukraine, its team of content reviewers includes native Ukrainian speakers “who understand the local context,” according to Szulc. “It often takes a local to understand the specific meaning of a word or the political climate in which a post is shared,” she told The Record.

Ukrainians had been asking Facebook to appoint representatives in Ukraine since 2015. “At that time we had scandals regarding the company’s content moderation policy in the country,” Zolotukhin said. Finally, in 2019, Facebook appointed its first public policy manager for Ukraine — Kateryna Kruk. “She’s very patriotic and professional,” according to Zolotukhin.

“Kateryna is doing a great job, but she cannot handle everything alone,” Melnyk told The Record. To help her, Meta partnered with local third-party fact-checking organizations, including Vox Ukraine and Stop Fake, providing them with additional financial support.

When Zelensky came to power in 2019, the Ukrainian authorities became more involved in Meta’s policy in the region. MinDigital, headed by 33-year-old Mykhailo Fedorov, has made Facebook one of the main communication platforms for Zelensky’s government, using it to reach wider audiences.

Tech vs. people

Both in a crisis and in normal times, Meta uses a combination of artificial intelligence, human content reviewers and user reports to identify and remove harmful content.

Sometimes the system breaks. Earlier in March, a group of Facebook engineers identified a bug that, instead of suppressing misinformation, had promoted it for months, according to an internal report on the incident obtained by The Verge.

While the bug was active, Facebook failed, among other things, to downgrade content shared by Russian state media, which Meta had pledged to stop recommending in response to the war in Ukraine.

Because part of Meta’s content moderation is automated, it can have unexpected consequences. For example, some Ukrainian activists, volunteers and media channels were blocked after being reported by Russian users, according to Melnyk.

Meta also temporarily blocked hashtags like #Bucha and #BuchaMassacre, saying that other users had reported that this content “may not meet community guidelines.”

Another problem, according to Melnyk, is the location-based ban, meaning that some propagandistic content is only banned for Ukrainian users but can be viewed in other countries.

Although it may seem like Meta didn’t have a thought-out plan for the war in Ukraine, experts say it’s hard to have one given that conflicts in each country are different. “In the ideal world people want one set of rules, but that just isn’t how it works,” Harbath said.

“We need to understand that and think about how we want these companies to be transparent in the values they have when making any changes or exceptions and how they implement them,” she told The Record.

Daryna Antoniuk is a reporter for Recorded Future News based in Ukraine. She writes about cybersecurity startups, cyberattacks in Eastern Europe and the state of the cyberwar between Ukraine and Russia. She previously was a tech reporter for Forbes Ukraine. Her work has also been published at Sifted, The Kyiv Independent and The Kyiv Post.