GitHub to review its exploit-hosting policy in light of recent scandal

Code-hosting platform GitHub has asked the infosec community to provide feedback on a series of proposed changes to the site's policies that dictate how its employees will deal with malware and exploit code uploaded to its platform.

According to the proposed changes, GitHub wants clearer rules to distinguish code used for vulnerability research from code abused by threat actors in real-world attacks.

Currently, this line is blurred. Anyone can upload malware or exploit code to the platform and label it as "security research," with the expectation that GitHub staff will leave it alone.

GitHub is now asking project owners to clearly state the nature of their code and whether it could be used to harm others.

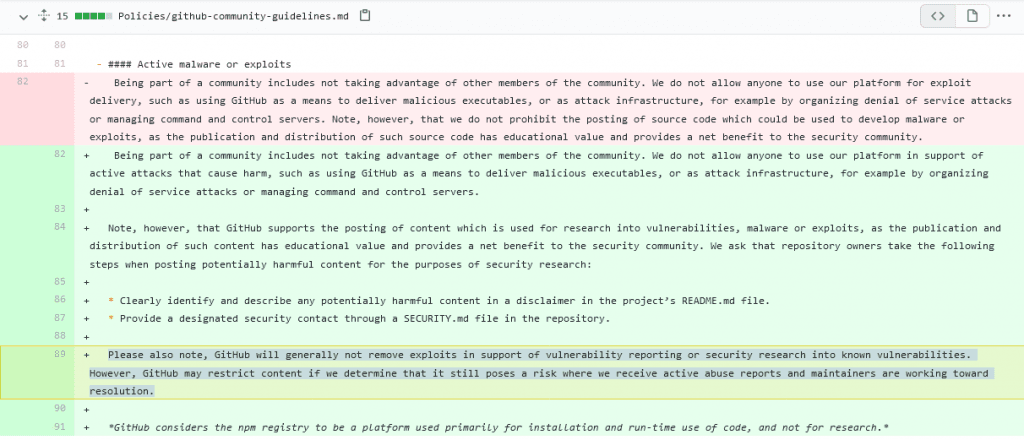

More importantly, GitHub is seeking the ability to intervene in certain cases and restrict or remove legitimate vulnerability research code that is being abused in the wild for attacks.

"These updates [...] focus on removing ambiguity in how we use terms like 'exploit,' 'malware,' and 'delivery' to promote clarity of both our expectations and intentions," said Mike Hanley, Chief Security Officer at GitHub.

Hanley and GitHub are now encouraging members of the cybersecurity community to provide feedback on where the line between security research and malicious code should be drawn.

Aftermath of the ProxyLogon PoC debacle

The changes to GitHub's policies proposed today are a direct result of a recent scandal dating back to last month.

In early March 2021, Microsoft, GitHub's parent company, disclosed a series of bugs known as ProxyLogon that were being abused by Chinese state-sponsored hacking groups to breach Exchange servers across the world.

The OS maker released patches, and a week later, a security researcher reverse-engineered the fixes and developed proof-of-concept exploit code for the ProxyLogon bugs, which he uploaded to GitHub.

Six hours after the code was uploaded to GitHub, Microsoft's security team intervened and removed the researcher's code, a move that sparked an industry-wide outcry and widespread criticism of Microsoft.

Even tho @Microsoft decided to wield their power to take down an exchange POC it was up for more than long enough and should be considered in the wild now https://t.co/3DKK0UR6tF

— Chase Dardaman (@CharlesDardaman) March 10, 2021

The ProxyLogon PoC has been removed by Microsoft from https://t.co/phMQFwpuHQ, but is still available on https://t.co/NhspqTdgXJ https://t.co/bX0u8u1D9Z

— Florian Roth (@cyb3rops) March 11, 2021

The thinking behind Microsoft's move was that it was merely protecting Exchange server owners from attacks that would have weaponized the researcher's code.

While GitHub allowed the researcher and others to re-upload the exploit code, the company would like to remove this ambiguity in its platform policy and allow itself to intervene for the general good.

Outcome: TBD

It is currently unclear whether GitHub actually plans to act on the feedback it receives, or whether this is just a public charade and the company intends to adopt its proposed changes as they stand, including the ability to intervene whenever it believes certain code might be abused for attacks.

Just a few hours after GitHub's announcement, the proposal has already sparked heated debate online, with opinions sharply divided.

Hey @metasploit and @rapid7 your code is used in exploits and attacks all the time even tho it’s widely considered good how will you react to being deplatformed over it?

— Chase Dardaman (@CharlesDardaman) April 29, 2021

Some support the company's proposed changes, while others feel the current state of affairs works fine: users can report blatantly malicious code to GitHub to have it taken down, while proof-of-concept exploit code stays on the platform, even if it is being abused.

The reasoning behind leaving exploit code on the platform is simple: such code often gets reposted on similar platforms, and removing it from GitHub does not stop threat actors from weaponizing it, since they have plenty of other ways to get their hands on exploits if they want them.

"The community knows what's malicious and not, to be honest," John Jackson, a Senior Application Security Engineer at Shutterstock, told The Record today.

"We need malware, exploits, PoCs, tooling. They will sink a lot of people if they go through with this. I hope they make the right choice and do not become the Github police."

Catalin Cimpanu is a cybersecurity reporter who previously worked at ZDNet and Bleeping Computer, where he became a well-known name in the industry for his constant scoops on new vulnerabilities, cyberattacks, and law enforcement actions against hackers.