Hackers use ChatGPT phishing websites to infect users with malware

Cybercriminals are taking advantage of ChatGPT’s popularity to distribute malware and carry out cyberattacks, researchers have found.

Since the moment it launched, ChatGPT has raised concerns among cybersecurity experts about its potential to aid criminals in writing malicious code. But according to a report published this week by threat intelligence company Cyble, bad actors are also setting up phishing websites mimicking the AI tool’s branding.

Researchers at Cyble have found several cases where hackers created fake websites with ChatGPT’s icon and name to spread various types of malware and steal credit card information.

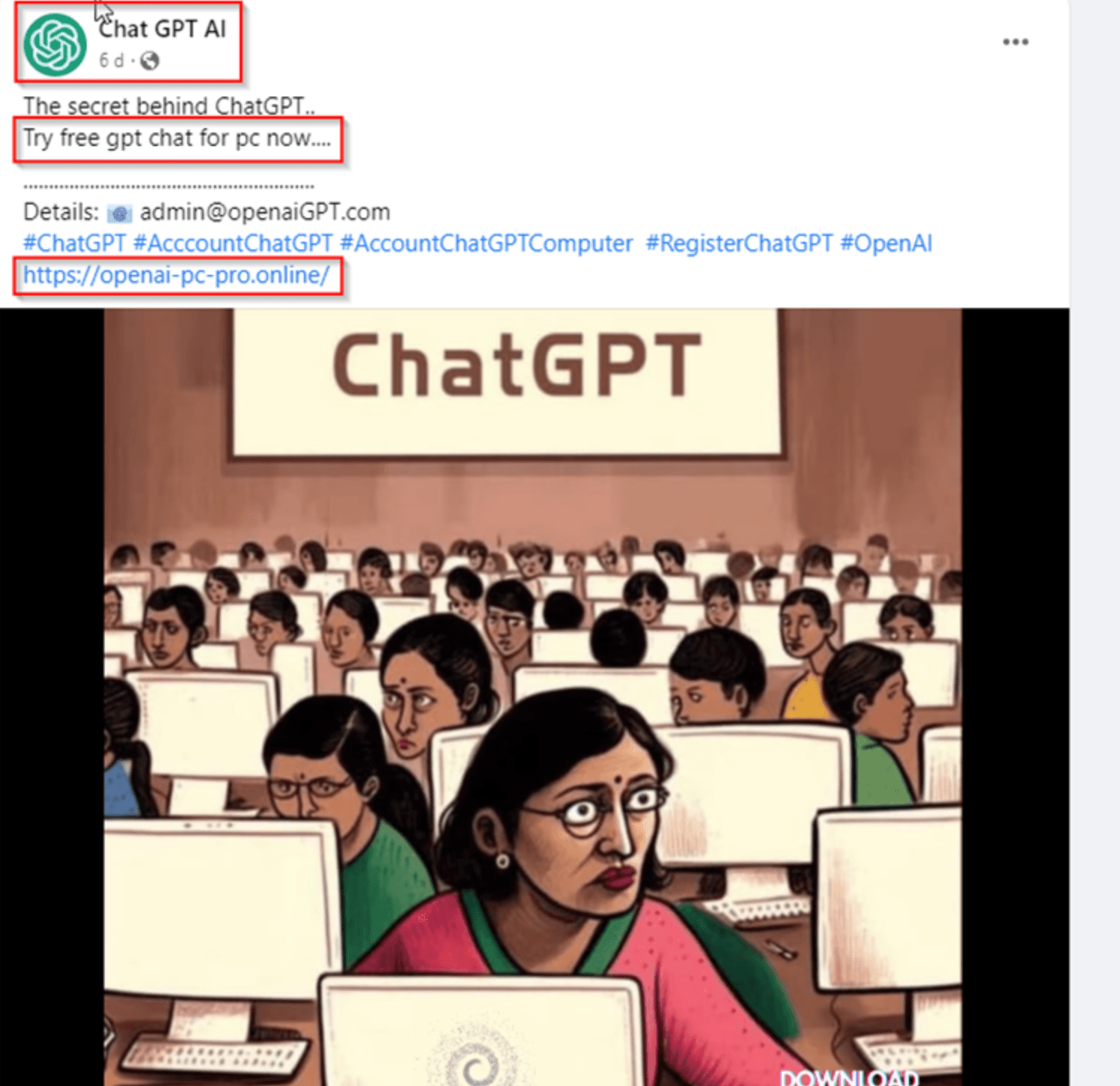

These websites were promoted through an unofficial ChatGPT social media page with over 3,500 followers.

The page tried to look authentic by posting content, such as videos and text, about different AI tools. Some of the posts contained links to the fake websites of ChatGPT or its developer, OpenAI.

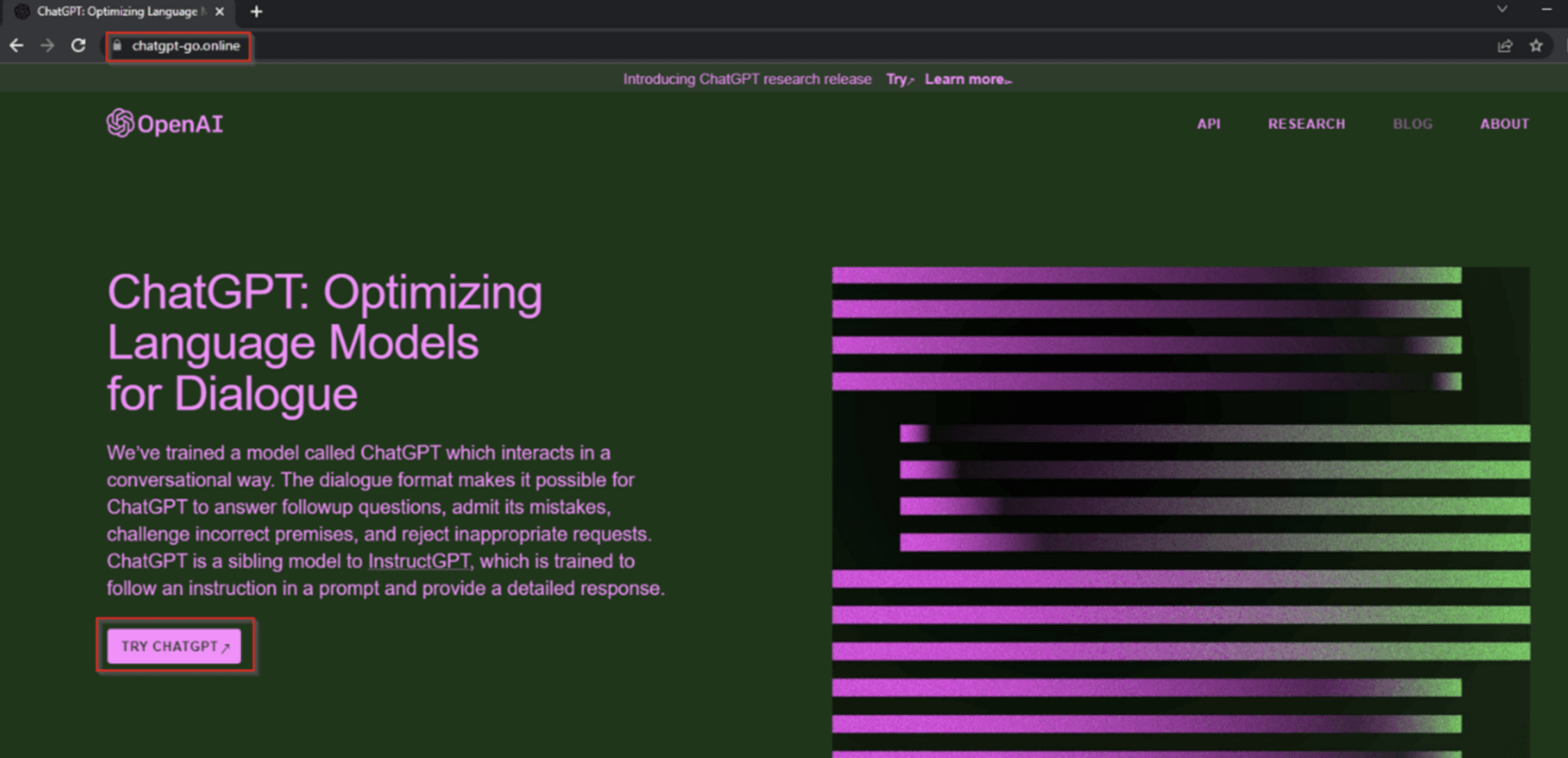

When users clicked the “Download for Windows” or “Try ChatGPT” buttons on a phishing website, a malicious file containing stealer malware was automatically downloaded to their devices. Once executed, the malware could collect sensitive data without the victim’s knowledge.

Researchers found that these phishing sites distributed several notorious malware families, including Lumma Stealer, Aurora Stealer, and clipper malware designed to target cryptocurrency transactions.

In addition to hosting information-stealers and other malware, hackers also used ChatGPT- and OpenAI-themed phishing websites to commit financial fraud.

One common tactic has involved creating fake ChatGPT-related payment pages that were designed to steal victims’ money and credit card information.

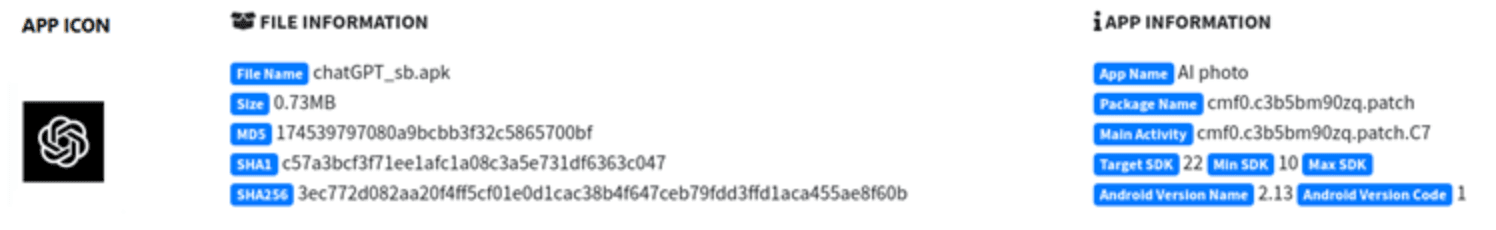

Researchers have also identified over 50 fake and malicious apps that used the ChatGPT icon to spread adware and spyware or to commit telecom fraud, in which scammers attempt to steal money by placing fraudulent charges on a victim's phone bill.

“Users who fall victim to these malicious campaigns could suffer financial losses or even compromise their personal information, causing significant harm,” Cyble said.

When asked by The Record, ChatGPT responded that it is aware of fake ChatGPT apps spreading malware and recommended that users only download apps from trusted sources, such as the Apple App Store or Google Play Store.

If a user suspects that they have downloaded a fake ChatGPT app or any other app that may contain malware, they should immediately uninstall the app and run a virus scan on their device. They should also change any passwords that may have been compromised as a result of the malware, the AI program said.
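In the same spirit as that advice, a link can be checked before it is clicked. The Python sketch below is illustrative only and does not come from the Cyble report: it tests whether a URL's hostname belongs to a short allow-list of domains. The `OFFICIAL_DOMAINS` set and the `is_official_link` function are hypothetical names, and the listed domains are an assumption that should be verified independently.

```python
from urllib.parse import urlsplit

# Assumption: an illustrative allow-list, not taken from the Cyble report.
OFFICIAL_DOMAINS = {"openai.com", "chatgpt.com"}


def is_official_link(url: str) -> bool:
    """Return True only if the URL's hostname is an allow-listed domain
    or a subdomain of one. Anything unparseable is treated as untrusted."""
    try:
        host = urlsplit(url).hostname
    except ValueError:
        # urlsplit raises ValueError on malformed input such as a broken IPv6 host.
        return False
    if not host:
        # No scheme or no hostname: treat as untrusted.
        return False
    host = host.lower().rstrip(".")
    # Match the domain exactly or as a dot-separated suffix, so that
    # "openai.com.example.net" does not pass as "openai.com".
    return any(host == d or host.endswith("." + d) for d in OFFICIAL_DOMAINS)


if __name__ == "__main__":
    # The second and third URLs are made-up lookalikes for demonstration.
    for link in (
        "https://chat.openai.com/",
        "https://openai.com.example.net/download",
        "https://openai.com@evil.example/",
    ):
        print(link, is_official_link(link))
```

Comparing the parsed hostname, rather than searching the URL for a brand name, is the point of the sketch: lookalike addresses often contain the real name somewhere in the string. A check like this only screens links and does not establish that a downloaded file is safe.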

The program is also aware of how it could be exploited by hackers. When asked, ChatGPT generated a response saying that threat actors could use its capabilities for phishing and social engineering attacks or to create text that contains malicious code.

Daryna Antoniuk is a reporter for Recorded Future News based in Ukraine. She writes about cybersecurity startups, cyberattacks in Eastern Europe and the state of the cyberwar between Ukraine and Russia. She previously was a tech reporter for Forbes Ukraine. Her work has also been published at Sifted, The Kyiv Independent and The Kyiv Post.